XRHands synchronization

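This module demonstrates how to synchronize the state of hands tracked with XR Hands, including finger tracking.

A hand can be displayed from either of the following sources:

- data received from the controllers the user is holding

- finger tracking data

For finger tracking data, the module shows how to heavily compress the hand bone data to reduce bandwidth consumption.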

Note:

Apart from its OpenXR support (Oculus Quest, Apple Vision Pro, etc.), this addon is similar to Meta OVR hands synchronization.

It does, however, add several improvements as well as gesture detection tools.

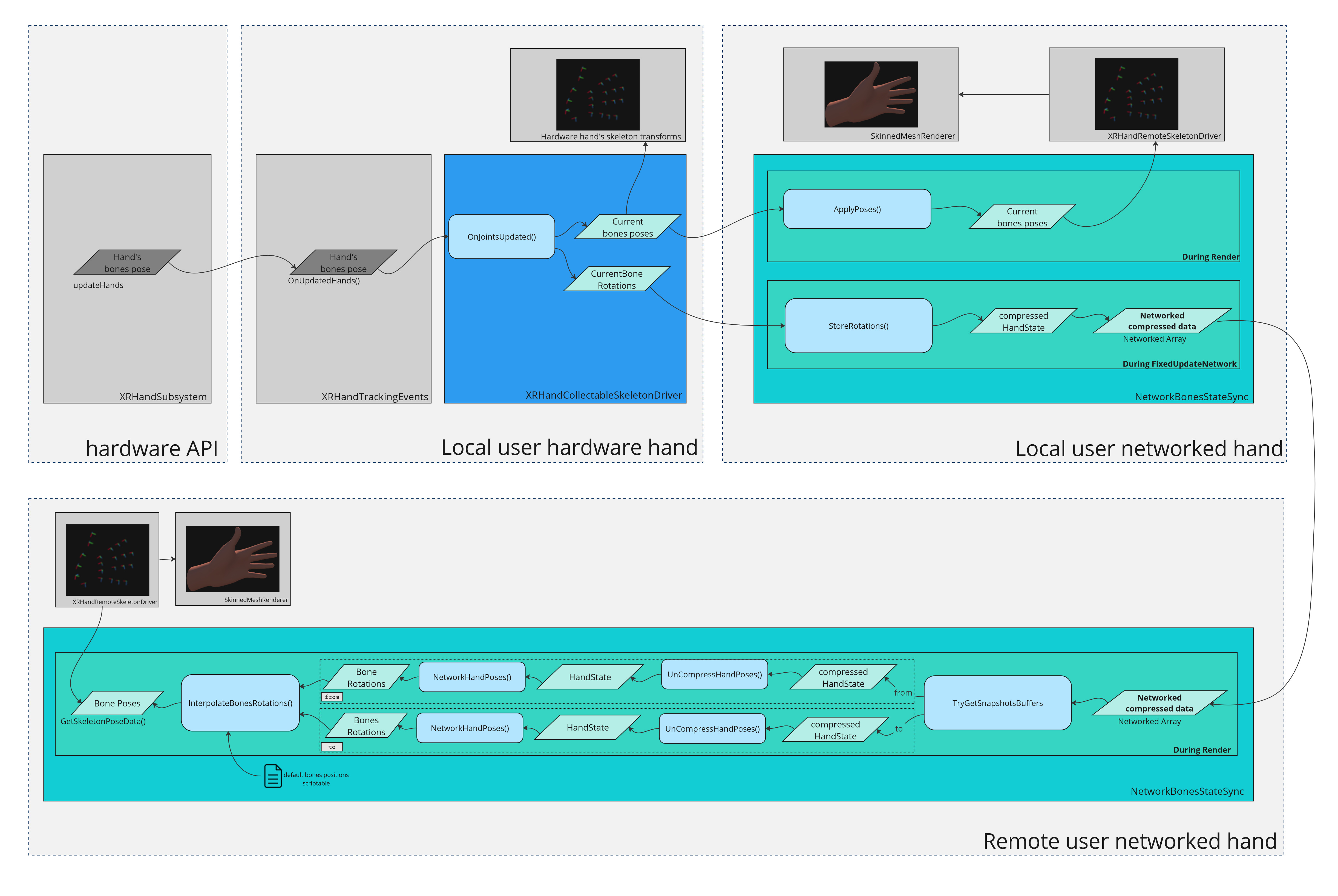

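Hand logic overview

In this addon, controller-based hand tracking and finger-based hand tracking can coexist. The goal is to allow a seamless transition from one to the other.

- When using controllers, the hand model is displayed with the Oculus Sample Framework, as in the other VR samples.

- When using finger tracking:

  - For the local user, XRHandCollectableSkeletonDriver, which inherits from the XRHands XRHandSkeletonDriver component, collects the hand and finger bone data and applies it to the local skeleton. This skeleton is used by a mesh displayed only locally (in the default prefabs, this mesh is hidden, and the network version of the hand, the same one remote users see, is displayed instead).

  - For remote users, the NetworkBonesStateSync component (described below) restores the hand and finger bone rotation data and moves the transforms of the hand skeleton, which in turn lets the SkinnedMeshRenderer render the hand properly. On each Render, NetworkBonesStateSync uses Fusion's interpolation data to smoothly interpolate the bone rotations between two received hand states, so that the bones also move smoothly between two ticks.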

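Local hardware rig hand components

The XRHandCollectableSkeletonDriver component, located in the hand hierarchy of the local user's hardware rig, collects the hand state so that it can be synchronized. To access the hand state (notably the finger bone rotations), it relies on its parent class, the XRHands XRHandSkeletonDriver component, and requires a sibling XRHandTrackingEvents component.

It also includes a helper property indicating whether finger tracking is currently in use.

Note:

XRHandCollectableSkeletonDriver contains analysis code that finds the default bone positions and proposes a compressed representation of the bone rotations. Both are needed by the ScriptableObjects used to synchronize the hand state, which are described in "Network rig hand components" below.

Default ScriptableObjects suited to most needs are provided with the addon, so unless your hand bones differ significantly, you do not need to run this analysis code.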
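As a rough illustration of what such an analysis can compute, the sketch below (Python for brevity; the addon itself is Unity C#) takes recorded Euler-angle samples for one bone, measures the observed range on each axis, and flags axes that barely move as not worth synchronizing. The sample format, the `min_range_deg` threshold and the `propose_axes`/`AxisProposal` names are hypothetical, not the addon's analysis code.

```python
from dataclasses import dataclass
from typing import List, Tuple

EulerSample = Tuple[float, float, float]  # hypothetical: (x, y, z) local angles in degrees

@dataclass
class AxisProposal:
    axis: str          # "x", "y" or "z"
    min_deg: float     # smallest observed angle
    max_deg: float     # largest observed angle
    synchronize: bool  # False when the axis barely moves

def propose_axes(samples: List[EulerSample], min_range_deg: float = 2.0) -> List[AxisProposal]:
    """Hypothetical analysis for one bone: measure the observed range on each axis.

    Assumes the angles never wrap around +/-180 degrees during the recording.
    Axes whose range stays below min_range_deg are treated as fixed, so they
    would not need to be sent over the network.
    """
    proposals = []
    for i, name in enumerate("xyz"):
        values = [sample[i] for sample in samples]
        lo, hi = min(values), max(values)
        proposals.append(AxisProposal(name, lo, hi, (hi - lo) >= min_range_deg))
    return proposals

# A finger joint that mostly bends around x while y and z barely move.
recorded = [(0.0, 1.0, 0.5), (45.0, 1.2, 0.4), (80.0, 0.9, 0.6)]
proposals = propose_axes(recorded)
```

Axes flagged as not synchronized could then be dropped, and the observed min/max of the remaining axes would bound their quantization range.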

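Network rig hand components

For the local user, the NetworkBonesStateSync component on the user's network rig looks in the local hardware hand for a component implementing the IBonesCollecter interface, reads the local hand state from it, and stores that state in networked variables during FixedUpdateNetwork.

The IBonesCollecter interface is implemented by the XRHandCollectableSkeletonDriver component and provides:

- the finger tracking state

- the hand bone rotations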
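The collect-and-store flow can be sketched in Python under simplifying assumptions: `BonesCollector` stands in for IBonesCollecter, while `NetworkHandState` and `capture_state` are hypothetical names standing in for the networked variables written in FixedUpdateNetwork. Rotations are plain (x, y, z, w) tuples rather than Unity quaternions.

```python
from dataclasses import dataclass, field
from typing import List, Protocol, Tuple

Quaternion = Tuple[float, float, float, float]  # (x, y, z, w)

class BonesCollector(Protocol):
    """Simplified stand-in for IBonesCollecter: exposes the two pieces of data listed above."""
    @property
    def is_finger_tracking(self) -> bool: ...
    def bone_rotations(self) -> List[Quaternion]: ...

@dataclass
class NetworkHandState:
    """Hypothetical networked state, written once per simulation tick by the local user."""
    is_finger_tracking: bool = False
    rotations: List[Quaternion] = field(default_factory=list)

def capture_state(collector: BonesCollector, state: NetworkHandState) -> None:
    # Counterpart of the work done in FixedUpdateNetwork: copy the local hand
    # state from the collector into the networked state.
    state.is_finger_tracking = collector.is_finger_tracking
    state.rotations = list(collector.bone_rotations())

class FakeCollector:
    """Test double playing the role of XRHandCollectableSkeletonDriver."""
    def __init__(self, tracking: bool, rotations: List[Quaternion]):
        self._tracking = tracking
        self._rotations = rotations

    @property
    def is_finger_tracking(self) -> bool:
        return self._tracking

    def bone_rotations(self) -> List[Quaternion]:
        return self._rotations

state = NetworkHandState()
capture_state(FakeCollector(True, [(0.0, 0.0, 0.0, 1.0)] * 24), state)
```

Keeping the collector behind an interface is what lets the network component ignore which concrete driver produced the data.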

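For remote users, NetworkBonesStateSync parses this synchronized data in Render to rebuild the local hand state.

In addition to the rotation data synchronized over the network, a BonesStateScriptableObject provides the local positions of the bones, which never change.

The addon includes the LeftXRHandsDefaultBonesState and RightXRHandsDefaultBonesState assets, which define the local positions of the XRHands hand bones.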
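Because the local positions are constant, only rotations have to travel over the network; the receiver pairs each synchronized rotation with the stored position. A minimal sketch, assuming both lists use the same bone order; `DEFAULT_POSITIONS` and `rebuild_pose` are illustrative names and the position values are made up.

```python
from typing import List, Tuple

Vector3 = Tuple[float, float, float]
Quaternion = Tuple[float, float, float, float]  # (x, y, z, w)

# Made-up constant local positions, playing the role of a BonesStateScriptableObject.
DEFAULT_POSITIONS: List[Vector3] = [(0.0, 0.0, 0.0), (0.0, 0.02, 0.03), (0.0, 0.0, 0.04)]

def rebuild_pose(default_positions: List[Vector3],
                 synced_rotations: List[Quaternion]) -> List[Tuple[Vector3, Quaternion]]:
    """Pair each bone's constant local position with its synchronized local rotation."""
    if len(default_positions) != len(synced_rotations):
        raise ValueError("bone count mismatch between asset and network state")
    return list(zip(default_positions, synced_rotations))

identity = (0.0, 0.0, 0.0, 1.0)
pose = rebuild_pose(DEFAULT_POSITIONS, [identity] * 3)
```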

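To keep every bone rotation looking smooth at all times, NetworkBonesStateSync interpolates the bone rotations between two received states. It calls TryGetSnapshotsBuffers(out var fromBuffer, out var toBuffer, out var alpha) to obtain the "from" and "to" bone rotations to interpolate between.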
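The interpolation step can be sketched as a spherical linear interpolation (slerp) applied per bone. This is plain Python rather than Unity's `Quaternion.Slerp`, and `interpolate_bones` is a hypothetical helper that takes the "from" and "to" rotation buffers and the `alpha` factor in the same roles as the values returned by TryGetSnapshotsBuffers.

```python
import math
from typing import List, Tuple

Quaternion = Tuple[float, float, float, float]  # (x, y, z, w), assumed normalized

def slerp(a: Quaternion, b: Quaternion, t: float) -> Quaternion:
    dot = sum(x * y for x, y in zip(a, b))
    # q and -q encode the same rotation: flip b so we take the shortest path.
    if dot < 0.0:
        b = tuple(-x for x in b)
        dot = -dot
    if dot > 0.9995:
        # Nearly identical rotations: plain lerp avoids dividing by sin(theta) ~ 0.
        q = tuple(x + t * (y - x) for x, y in zip(a, b))
    else:
        theta = math.acos(dot)
        s = math.sin(theta)
        wa = math.sin((1.0 - t) * theta) / s
        wb = math.sin(t * theta) / s
        q = tuple(wa * x + wb * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in q))
    return tuple(x / norm for x in q)

def interpolate_bones(from_buffer: List[Quaternion], to_buffer: List[Quaternion],
                      alpha: float) -> List[Quaternion]:
    """Blend every bone rotation between two received hand states (alpha in [0, 1])."""
    return [slerp(a, b, alpha) for a, b in zip(from_buffer, to_buffer)]
```

With `alpha = 0.5`, a bone rotated by 0 degrees in the "from" state and 90 degrees around z in the "to" state ends up rotated by 45 degrees around z.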

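The hand pose is then applied to a local skeleton by a component of the network hand that implements IBonesReader (for instance XRHandRemoteSkeletonDriver, which inherits from the XRHands XRHandSkeletonDriver).

Finally, the SkinnedMeshRenderer uses this skeleton to display the hand properly.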

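Bandwidth optimization

Transferring bone information is very costly: a synchronized hand state contains 24 quaternions.

The NetworkBonesStateSync component reduces the required bandwidth by taking advantage of the properties of hand bones (specific rotation axes, limited range of motion, etc.). The precision of each bone can be specified in a dedicated HandSynchronizationScriptable.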
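To see why a limited range of motion saves bandwidth, consider one rotation axis whose angle is known to stay within [lo, hi]: instead of a 32-bit float, it can be mapped to an n-bit integer. The sketch below is a generic range quantizer in Python; the range and bit count in the example are illustrative, not values taken from HandSynchronizationScriptable.

```python
def quantize(angle: float, lo: float, hi: float, bits: int) -> int:
    """Map an angle in [lo, hi] to an integer in [0, 2**bits - 1]; out-of-range values are clamped."""
    steps = (1 << bits) - 1
    clamped = min(max(angle, lo), hi)
    return round((clamped - lo) / (hi - lo) * steps)

def dequantize(value: int, lo: float, hi: float, bits: int) -> float:
    """Inverse mapping; the error is at most half a step, (hi - lo) / (2 * (2**bits - 1))."""
    steps = (1 << bits) - 1
    return lo + value / steps * (hi - lo)

# A joint assumed to bend between -10 and 100 degrees, stored on 6 bits instead of 32.
q = quantize(42.0, -10.0, 100.0, 6)
restored = dequantize(q, -10.0, 100.0, 6)
```

Here `q` is 30 and `restored` is about 42.38 degrees; a wider range or fewer bits trades precision for bandwidth.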

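If no handSynchronizationScriptable is set on NetworkBonesStateSync, a default compression is used, which is less efficient than the one defined in the LeftHandSynchronization and RightHandSynchronization assets provided with the addon. These assets are designed to suit most needs:

- the compression ratio is very high, using about 20 times fewer bytes (hand bone rotations are stored in 19 bytes instead of 386 bytes for the full quaternions)

- the compression does not noticeably degrade the hand representation seen by remote users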
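Per-bone precision only pays off if the quantized values are packed at the bit level instead of each being rounded up to whole bytes. A minimal bit-packing sketch in Python, assuming a hypothetical list of (value, bit_count) fields; it illustrates the idea and does not reproduce the addon's actual data layout.

```python
from typing import List, Tuple

def pack_bits(fields: List[Tuple[int, int]]) -> bytes:
    """Pack (value, bit_count) pairs back to back, least significant bits first."""
    acc = 0
    total_bits = 0
    for value, bits in fields:
        if not 0 <= value < (1 << bits):
            raise ValueError(f"{value} does not fit in {bits} bits")
        acc |= value << total_bits
        total_bits += bits
    return acc.to_bytes((total_bits + 7) // 8, "little")

def unpack_bits(data: bytes, bit_counts: List[int]) -> List[int]:
    """Read the values back, given the same per-field bit counts used for packing."""
    acc = int.from_bytes(data, "little")
    values = []
    for bits in bit_counts:
        values.append(acc & ((1 << bits) - 1))
        acc >>= bits
    return values

# Three angles quantized on 6, 5 and 4 bits fit in 2 bytes instead of 12 bytes of floats.
packed = pack_bits([(30, 6), (17, 5), (9, 4)])
```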

Hand model switching

The HandRepresentationManager component controls which hand mesh is displayed, based on the hand tracking mode currently in use:

- with controller tracking, the Oculus Sample Framework hand mesh

- with finger tracking, a mesh relying on a bone skeleton animated by the XRHandSkeletonDriver component logic
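The selection rule above amounts to a small function of the tracking mode. This is a Python sketch under assumptions: `HandMesh`, `select_hand_mesh` and `renderer_states` are hypothetical names, and the real components enable or disable Unity renderers rather than returning values.

```python
from enum import Enum

class HandMesh(Enum):
    CONTROLLER = "Oculus Sample Framework hand mesh"
    FINGER_TRACKED = "skeleton-driven hand mesh"

def select_hand_mesh(is_finger_tracking: bool) -> HandMesh:
    """Pick the mesh to display from the current tracking mode."""
    return HandMesh.FINGER_TRACKED if is_finger_tracking else HandMesh.CONTROLLER

def renderer_states(is_finger_tracking: bool) -> dict:
    """Enable exactly one of the two meshes and disable the other."""
    selected = select_hand_mesh(is_finger_tracking)
    return {mesh: mesh is selected for mesh in HandMesh}
```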

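There are two versions of this script: one for the local hardware hand (used to animate the hand skeleton, for local collision purposes, or when an offline hand is needed) and one for the network hand.

Local hardware rig hand

This version relies on an IBonesCollecter (such as XRHandCollectableSkeletonDriver) on the hardware hand.

Among other features, it also switches between two grab colliders: one placed at the local palm position used with finger tracking, and one placed at the local palm position used with controller-based tracking (the "center" of the hand is not at exactly the same position in the two modes). The same applies to the two index fingertip colliders.

Note: in the current setup, only the network hand display is selected. The hardware rig hand only exists to collect the bone positions and to properly animate the index collider position. Therefore, the material used for the hardware hand is transparent (if rendering were disabled instead, the bones would not be moved by the animation). If the MaterialOverrideMode of HardwareHandRepresentationManager is set to Override, the hardware hand meshes are automatically made transparent using the transparent material given in the overrideMaterialForRenderers field.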

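Network rig hand

The NetworkHandRepresentationManager component controls which mesh is displayed based on the hand mode: the Oculus Sample Framework hand mesh when using controller tracking (it checks the NetworkBonesStateSync data, which synchronizes the finger tracking status), or otherwise a mesh relying on the NetworkBonesStateSync logic.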

Avatar addon

If the Avatar addon is used in this project, the hands can be colored based on the avatar's skin color. To enable this:

- uncomment #define AVATAR_ADDON_AVAILABLE on the second line of HandManagerAvatarRepresentationConnector

- add a HandManagerAvatarRepresentationConnector alongside each NetworkHandRepresentationManager and HardwareHandRepresentationManager

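Gesture recognition

To complement the finger tracking data, several helper scripts are provided to help analyze hand poses.

FingerDrivenGesture is the base class of these scripts: it handles the connection to the OpenXR hand subsystem and the tracking-lost callbacks.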

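FingerDrivenHardwareRigPose handles the hand commands that are normally driven by controller buttons (which determine whether the user is grabbing, pointing, and so on). For grabbing, it detects a pinch or a press of the middle and ring fingers (both options can be enabled at the same time; either of them can trigger the HardwareHand isGrabbing).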
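A sketch of such a grab decision in Python, assuming the per-finger inputs are already computed: a thumb-to-fingertip distance for the pinch option and a curl value in [0, 1] for the press option. The thresholds, the `GrabSettings` fields, the requirement that both fingers qualify, and the OR combination are all assumptions, not FingerDrivenHardwareRigPose's actual logic.

```python
from dataclasses import dataclass

@dataclass
class GrabSettings:
    use_pinch: bool = True
    use_press: bool = True
    pinch_distance: float = 0.02  # hypothetical: meters between thumb tip and finger tip
    press_curl: float = 0.8       # hypothetical: 0 = extended, 1 = fully curled

def is_grabbing(settings: GrabSettings, middle_pinch_dist: float, ring_pinch_dist: float,
                middle_curl: float, ring_curl: float) -> bool:
    """Either enabled detection method is enough to report a grab."""
    pinch = (settings.use_pinch
             and middle_pinch_dist < settings.pinch_distance
             and ring_pinch_dist < settings.pinch_distance)
    press = (settings.use_press
             and middle_curl > settings.press_curl
             and ring_curl > settings.press_curl)
    return pinch or press
```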

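FingerDriverBeamer detects a "gun" pose (index finger and thumb extended) and triggers the RayBeamer (used for teleportation). Once activated, the beam stays active as long as the thumb stays up (the index finger is no longer checked).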
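The activation rule described above is a small hysteresis: the full pose is needed to start, but a weaker condition keeps the beam going, which avoids flicker when the index finger relaxes. A Python sketch, assuming boolean "extended" inputs are computed elsewhere; `BeamerGesture` is a hypothetical name.

```python
class BeamerGesture:
    """Hypothetical hysteresis: start on the full "gun" pose, keep going on the thumb alone."""

    def __init__(self) -> None:
        self.active = False

    def update(self, index_extended: bool, thumb_up: bool) -> bool:
        if not self.active:
            # Activation requires both the index finger and the thumb to be extended.
            self.active = index_extended and thumb_up
        else:
            # Once active, only the thumb is checked; lowering it stops the beam.
            self.active = thumb_up
        return self.active
```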

FingerDrivenMenu makes a menu pop up when the user looks at their hand for a short time, and keeps the menu updated as long as the headset is facing the hand.
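One way to implement such a behavior is a facing test (angle between the headset's forward direction and the direction to the hand) combined with a dwell timer. The Python sketch below assumes a 25-degree cone, a 0.5-second dwell and that looking away hides the menu; all names and values are hypothetical rather than FingerDrivenMenu's actual settings.

```python
import math
from typing import Tuple

Vector3 = Tuple[float, float, float]

def _normalize(v: Vector3) -> Vector3:
    n = math.sqrt(sum(c * c for c in v))
    return tuple(c / n for c in v)

def is_facing(head_pos: Vector3, head_forward: Vector3, hand_pos: Vector3,
              max_angle_deg: float = 25.0) -> bool:
    """True when the hand lies within max_angle_deg of the headset's forward direction."""
    to_hand = _normalize(tuple(h - p for h, p in zip(hand_pos, head_pos)))
    forward = _normalize(head_forward)
    cos_angle = sum(a * b for a, b in zip(to_hand, forward))
    return cos_angle >= math.cos(math.radians(max_angle_deg))

class HandMenu:
    def __init__(self, dwell_time: float = 0.5):
        self.dwell_time = dwell_time  # seconds the user must look before the menu appears
        self.look_time = 0.0
        self.visible = False

    def update(self, facing: bool, dt: float) -> bool:
        if not facing:
            # Assumption: looking away resets the timer and hides the menu.
            self.look_time = 0.0
            self.visible = False
        else:
            self.look_time += dt
            if self.look_time >= self.dwell_time:
                self.visible = True
        return self.visible
```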

Pincher, a subclass of Toucher, detects pinch gestures and notifies the objects implementing the IPinchable interface. An object can "consume" a pinch with TryConsumePinch, which ensures that a single pinch triggers only one action.
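The consumption mechanism can be sketched as a per-pinch flag that only the first receiver can set. In this Python sketch, `Pinch`, `Button` and `dispatch` are hypothetical names, and keeping the flag on a pinch object is an assumption about where the state lives; it only illustrates the "one pinch, one action" guarantee.

```python
from typing import Iterable

class Pinch:
    """One pinch gesture; it can be consumed by at most one receiver."""

    def __init__(self) -> None:
        self.consumed = False

    def try_consume(self) -> bool:
        if self.consumed:
            return False
        self.consumed = True
        return True

class Button:
    """Hypothetical IPinchable-like receiver that acts only if it consumes the pinch."""

    def __init__(self, name: str) -> None:
        self.name = name
        self.activations = 0

    def on_pinch(self, pinch: Pinch) -> None:
        if pinch.try_consume():
            self.activations += 1

def dispatch(pinch: Pinch, receivers: Iterable[Button]) -> None:
    # Every touched receiver is notified, but consumption limits the result to one action.
    for receiver in receivers:
        receiver.on_pinch(pinch)
```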

Demo

The demo scene is located in the Assets\Photon\FusionAddons\XRHandsSynchronization\Demo\Scenes\ folder.

Dependencies

- XRShared addon 2.0

Download

The latest version of this addon is included in the Industries addon project.

It is also included in the free XR addon project.

Supported topologies

- Shared mode

Changelog

- Version 2.0.6:

  - NetworkHandRepresentationManager can now specify a material for the local user

  - Fix to prefab's index tip position

- Version 2.0.5:

  - Allow working with an IBonesCollector that does not provide bone positions

  - Allow skipping the automatic hardware hand wrist translation (useful for XRIT rigs)

- Version 2.0.4: Allow to disable tip collider in finger tracking

- Version 2.0.3: Ensure compatibility with Unity 2021.x (box colliders, edited in 2022.x, in prefab had an improper size when opened in 2021.x)

- Version 2.0.2: Add define checks (to handle when Fusion not yet installed)

- Version 2.0.1: Change transparent material (unlit shader)

- Version 2.0.0: First release