Performance Tests

Performance tests, also known as load tests, are a useful and highly recommended way to verify whether your server-side implementation and your hardware can actually handle the expected load.

What to expect?

We are often asked a simple question: how many concurrent users (CCU) can a single Photon server handle?

Unfortunately, the answer is not that simple. It depends on several factors, for example:

- number of clients per game room

- message rates (combined: the number of messages per room per second)

- message sizes

- protocol type (reliable / unreliable)

- client platform

- server hardware specs

- etc.

In a typical use case, there are about 200 msg / room / s.

A standard server configuration looks like this:

- Quad core CPU (e.g. Intel Xeon E3-1270 v3, 3.5GHz).

- 8 GB RAM.

- 1 Gbps NIC / uplink port speed.

In this standard configuration, Photon can handle about 2000 - 3000 CCU per server.

The bottleneck is usually not the CPU but the NIC / network traffic. Since traffic is usually more expensive than CPU, it is often more cost-efficient to use smaller servers.

In general, bare metal servers give better results than VMs.

However, the numbers above are only a very rough estimate. To get reliable numbers for your own use case, you need to tune your setup and run load tests with your own code base.

Identify the Test Scenario

Before you run a load test, describe your use case and make an upfront calculation.

What are the hardware specs of your servers?

How many CCU do you expect for your game?

What type of game do you have? How is matchmaking done? Do you have different servers for different functionality?

Are you using the unmodified code from the Photon Server SDK, or do you have custom code? If so, what are the main changes?

How many clients per room?

How many messages are sent?

- Example: 4 clients / room, each calling RaiseEvent() every 100 ms

- Incoming: 4 * 10 msg / s = 40 msg / s

- Outgoing: 4 * 40 msg / s = 160 msg / s (every message is delivered to all 4 clients in the room)

- Total: 200 msg / room / s

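The message-rate arithmetic in the example above can be sketched as a small Python helper, so you can plug in your own room size and send interval. This is a minimal sketch, not part of any Photon SDK; the function name and the `include_sender` parameter are hypothetical. With `include_sender=True` it reproduces the example's assumption that every message is delivered to all clients in the room; set it to `False` if your events only go to the other clients.

```python
def messages_per_room_per_second(clients_per_room: int,
                                 send_interval_ms: float,
                                 include_sender: bool = True) -> dict:
    """Estimate incoming, outgoing and total messages per room per second.

    Hypothetical helper (not a Photon API). Assumes every client sends one
    message per send interval and the server forwards each message to all
    recipients in the room.
    """
    sends_per_client = 1000.0 / send_interval_ms        # 100 ms -> 10 msg/s
    incoming = clients_per_room * sends_per_client      # messages the server receives
    recipients = clients_per_room if include_sender else clients_per_room - 1
    outgoing = incoming * recipients                    # each message is fanned out
    return {"incoming": incoming, "outgoing": outgoing, "total": incoming + outgoing}


print(messages_per_room_per_second(4, 100))
# {'incoming': 40.0, 'outgoing': 160.0, 'total': 200.0}
```

With `include_sender=False`, the same room produces 120 outgoing and 160 total messages per second, which shows how much the delivery pattern alone shifts the estimate.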

What is the average message size?

- Example:

- 200 msg / room / s * 200 byte = ~ 40 kByte / s / room

- Expected: 1000 CCU / 4 clients per room = 250 rooms => 250 * 40 kByte / s = ~ 10 MByte / s (~ 80 MBit / s) in total


With the numbers from the example above, you are already close to the upper limit of a 100 MBit/s uplink port speed.
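The bandwidth estimate above can be checked the same way. The sketch below is hypothetical (the function names are illustrative) and counts payload bytes only; UDP/IP and protocol headers add overhead on top, so real traffic will be somewhat higher than this estimate.

```python
import math


def estimate_traffic(msgs_per_room_per_s: float, avg_msg_bytes: float,
                     ccu: int, clients_per_room: int) -> dict:
    """Estimate payload traffic for a whole server (headers not included).

    Hypothetical helper, not a Photon API.
    """
    bytes_per_room = msgs_per_room_per_s * avg_msg_bytes   # B/s per room
    rooms = math.ceil(ccu / clients_per_room)              # partly filled rooms count too
    total_bytes = bytes_per_room * rooms                   # B/s for all rooms
    total_mbit = total_bytes * 8 / 1_000_000               # bytes -> Mbit
    return {"bytes_per_room": bytes_per_room, "rooms": rooms,
            "total_bytes_per_s": total_bytes, "total_mbit_per_s": total_mbit}


def uplink_utilization(total_mbit_per_s: float, uplink_mbit_per_s: float) -> float:
    """Fraction of the uplink port speed used by the estimated traffic."""
    return total_mbit_per_s / uplink_mbit_per_s


t = estimate_traffic(200, 200, ccu=1000, clients_per_room=4)
print(t["rooms"], t["total_mbit_per_s"])                 # 250 80.0
print(uplink_utilization(t["total_mbit_per_s"], 100))    # 0.8
```

A utilization of 0.8 of a 100 MBit/s port, before any header overhead, is why the example is already close to the limit.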

Several client libraries have built-in "Network Traffic Stats" that can help you answer these questions.

Build a Test Client

Photon is optimized to handle large numbers of connections and large amounts of traffic. However, the client SDKs and libraries are not intended for load tests: the client side cannot be optimized the same way as Photon.

In most cases, your "game client" is already finished by the time you want to run a load test, so it may seem easy to reuse your real-world game client for the load test. But if you build a "load test client" with Photon's client SDKs, the clients themselves will become the bottleneck and you will get misleading results.

Building a good test client is the real challenge for a load test.

We recommend the following approach:

- Note down the typical sequence of operations / events that your game client sends.

- Build a simple Photon Server(!) application that mimics the behavior of your game client.

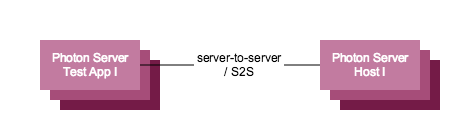

- Use Photon's server-to-server (S2S) features to establish the connection between your "S2S load test application" and the "server-side" Photon.
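To turn the noted-down sequence of operations into a load test plan, it can help to represent it as data and expand it into a timeline for many simulated clients. The sketch below is purely illustrative: the step names (`connect`, `join_room`, `raise_event`, `leave_room`), the timings, and the staggered ramp-up are hypothetical placeholders, and it does not call any Photon API. In a real S2S load test application, each timeline entry would be mapped to the corresponding operation sent over the server-to-server connection.

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass(frozen=True)
class Step:
    name: str    # hypothetical operation label, e.g. "join_room"
    at_ms: int   # offset from the simulated client's start time


def game_client_script(play_ms: int = 1000, send_interval_ms: int = 100) -> List[Step]:
    """Hypothetical recorded sequence: connect, join, send events, leave."""
    steps = [Step("connect", 0), Step("join_room", 200)]
    steps += [Step("raise_event", 500 + t) for t in range(0, play_ms, send_interval_ms)]
    steps.append(Step("leave_room", 500 + play_ms))
    return steps


def build_timeline(steps: List[Step], clients: int,
                   ramp_up_ms: int) -> List[Tuple[int, int, str]]:
    """Expand one script into (time_ms, client_id, step_name) for many clients.

    Start times are spread evenly over ramp_up_ms so that the simulated
    clients do not all connect at the same instant.
    """
    stagger = ramp_up_ms / clients if clients else 0
    timeline = []
    for client_id in range(clients):
        start = int(client_id * stagger)
        for step in steps:
            timeline.append((start + step.at_ms, client_id, step.name))
    timeline.sort()
    return timeline


plan = build_timeline(game_client_script(), clients=4, ramp_up_ms=400)
print(len(plan), plan[0], plan[-1])  # 52 (0, 0, 'connect') (1800, 3, 'leave_room')
```

Keeping the scenario as data makes it easy to scale the number of simulated clients without touching the replay logic.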

Configure Photon

Photon / PhotonServer.config:

- Use the default configuration and don't make any changes.

- The default configuration is optimized for a broad range of use cases. Any changes might have unforeseen impacts and will make it harder to understand and analyze the test results.

Logging / log4net.config:

- Make sure that you are using the "INFO" log level in all log4net.config files.

- Excessive logging on the "DEBUG" level has a huge performance impact and must be avoided!

- Learn more about logging here: https://logging.apache.org/log4net/release/config-examples.html
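Checking every log4net.config by hand is error-prone on a full deployment. The following Python sketch walks a directory tree and reports every `<level value="...">` element that is not set to INFO. It is a hypothetical helper: it only inspects `<level>` elements in files named `log4net.config` (appender thresholds and filters are not checked).

```python
import pathlib
import xml.etree.ElementTree as ET
from typing import Iterator, Tuple


def find_non_info_levels(root_dir: str) -> Iterator[Tuple[str, str, str]]:
    """Yield (file, parent_element, level) for every <level> element in a
    log4net.config below root_dir whose value is not INFO.

    Hypothetical helper; it only looks at <level> elements.
    """
    for path in sorted(pathlib.Path(root_dir).rglob("log4net.config")):
        tree = ET.parse(path)
        for parent in tree.iter():                 # <root>, <logger>, ...
            for level in parent.findall("level"):  # direct <level> children only
                value = level.get("value", "")
                if value.strip().upper() != "INFO":
                    yield str(path), parent.tag, value
```

For example, `for path, element, level in find_non_info_levels(r"C:\Photon\deploy"): print(path, element, level)` lists every offending entry; the deploy path here is a hypothetical example.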

Run Your Tests

- Host your Photon S2S load test client on separate, physical machines.

- As a rule of thumb, you need at least two "S2S load test machines" per "server-side" Photon instance to distribute the load evenly.

Before you run the tests, install the performance counters and create performance counter logs on the server side as well as on the client machines:

- stop Photon if it's running

- from Photon Control -> Performance Counters -> Install Counters, Create Logging Set

- start "perfmon" from the command line. Under "Data Collector Sets" -> "User Defined", select photon_perf_log in the right-hand window pane -> Properties, and set the sample interval to 1 second (the default of 1 minute does not provide enough data)

- start Photon

- Photon Control -> Performance Counters -> Start Logging

- run the load test

- Photon Control -> Performance Counters -> Stop Logging

- get performance logs from C:\perflogs\admin

- get log files from \deploy\bin_win64\log and \deploy\log

If creating the counter log from Photon Control fails, run this command from \deploy\bin_tools\perfmon:

logman.exe create counter photon_perf_log -si 00:01 -v mmddhhmm -cf logman.config.txt

Analyze Performance and Identify Bottlenecks

To analyze the load, open the performance counter log in perfmon.

The "doc" folder of the Photon Server SDK contains a "photon-perfcounter.pdf" file that lists and briefly explains all counters.

Estimate the "overall" load: for a first estimate, look at the "obvious" counters, such as CPU load, number of Peers / Connections Active, memory usage per process, disconnects, and network traffic (Bytes in / out / total).

These counters should give you an idea of whether the server handles the load as expected or whether there are bottlenecks that need further investigation.
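For longer runs, scanning the counters numerically can be faster than reading graphs. One option, assuming you first export the binary log to CSV with the Windows `relog` tool (for example `relog photon_perf_log.blg -f csv -o photon_perf_log.csv`; the file names are hypothetical), is a short Python script that reports mean and maximum for the counters you care about. The sketch assumes the usual perfmon CSV layout (timestamp in the first column, one counter per following column, blank cells for missing samples) and UTF-8 encoding; adjust the `encoding` argument if your export differs. The exact counter names are listed in photon-perfcounter.pdf.

```python
import csv
import statistics
from typing import Dict, List, Tuple


def summarize_counters(csv_path: str, substrings: List[str]) -> Dict[str, Tuple[float, float]]:
    """Return {counter: (mean, max)} for counter columns whose name contains
    one of the given substrings (case-insensitive). Blank cells are skipped."""
    with open(csv_path, newline="", encoding="utf-8-sig") as f:
        reader = csv.reader(f)
        header = next(reader)                      # column 0 is the timestamp
        wanted = [i for i, name in enumerate(header) if i > 0
                  and any(s.lower() in name.lower() for s in substrings)]
        samples: Dict[int, List[float]] = {i: [] for i in wanted}
        for row in reader:
            for i in wanted:
                cell = row[i].strip() if i < len(row) else ""
                try:
                    samples[i].append(float(cell))
                except ValueError:                 # empty or non-numeric sample
                    pass
    return {header[i]: (statistics.fmean(v), max(v))
            for i, v in samples.items() if v}
```

For example, `summarize_counters("photon_perf_log.csv", ["% Processor Time", "Bytes Total/sec"])` would summarize CPU and network counters, provided those columns exist in your export.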

Outgoing traffic (are the clients busy?):

As mentioned above, the client side is often the bottleneck. Check how well the "outgoing" traffic is handled, for example the number of Commands Resent / sec (if clients don't send ACKs, Photon resends the command) and the number of Queued Reliable Commands (commands that have been sent but not yet acknowledged).

If these values are high, it is very likely that you have a client-side problem.

Check these counters in any case. If you identify a client-side bottleneck, resolve it and re-run the test.
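One way to apply this check to exported samples is to flag a high peak resend rate and a steadily growing reliable-command queue. The sketch below is an illustrative heuristic, not an official Photon rule: the function names and thresholds are hypothetical and need to be calibrated against a run you know is healthy, and the growth is measured per sample (per second with the 1-second interval set up in perfmon).

```python
from statistics import fmean
from typing import List, Sequence


def slope(values: Sequence[float]) -> float:
    """Least-squares slope of the samples (change per sample)."""
    n = len(values)
    if n < 2:
        return 0.0
    mean_x, mean_y = (n - 1) / 2, fmean(values)
    num = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(values))
    den = sum((x - mean_x) ** 2 for x in range(n))
    return num / den


def client_side_warnings(resent_per_s: Sequence[float],
                         queued_reliable: Sequence[float],
                         max_resent_per_s: float,
                         max_queue_growth: float) -> List[str]:
    """Flag signs that the load test clients cannot keep up.

    Thresholds are hypothetical; calibrate them against a known-good run.
    """
    warnings = []
    if resent_per_s and max(resent_per_s) > max_resent_per_s:
        warnings.append(f"peak Commands Resent/sec {max(resent_per_s)} > {max_resent_per_s}")
    growth = slope(queued_reliable)
    if growth > max_queue_growth:
        warnings.append(f"Queued Reliable Commands growing by {growth:.1f} per sample")
    return warnings
```

A queue that keeps growing suggests that acknowledgements are not keeping up with the send rate, which is a stronger signal than a single spike.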

Incoming traffic (is the server busy?):

Check whether the server is busy by comparing how many of the "active" I/O, Business and ENet threads are currently "processing", whether there are messages in the ENet queue, etc.

These counters often correlate with the CPU / memory usage counters, so they often add little new information.
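If you still want a number for these counters, one option is to compute, per sample, the share of active threads in a pool that are processing, plus the share of samples with a non-empty ENet queue. This is an illustrative sketch: the function names and the 0.9 saturation cutoff are hypothetical, and the input lists are whatever samples you extracted for the corresponding counters.

```python
from typing import Dict, List, Sequence


def thread_utilization(processing: Sequence[float], active: Sequence[float]) -> List[float]:
    """Per-sample share of active threads that are processing (0.0 if none active)."""
    return [p / a if a else 0.0 for p, a in zip(processing, active)]


def server_busy_report(processing: Sequence[float], active: Sequence[float],
                       enet_queue: Sequence[float], busy_ratio: float = 0.9) -> Dict[str, float]:
    """Summarize how often a thread pool is saturated and the ENet queue is non-empty.

    busy_ratio is a hypothetical cutoff for counting a sample as saturated.
    """
    util = thread_utilization(processing, active)
    saturated = sum(1 for u in util if u >= busy_ratio) / len(util) if util else 0.0
    backlog = sum(1 for q in enet_queue if q > 0) / len(enet_queue) if enet_queue else 0.0
    return {"peak_utilization": max(util, default=0.0),
            "share_saturated": saturated,
            "share_with_enet_backlog": backlog}
```

Run it once per thread pool (I/O, Business, ENet) and compare the results with the CPU counters of the same run.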

Need Support?

If you need support or have questions, contact us.

Include as much information as possible, especially:

- all your answers to the questions in the "Identify the Test Scenario" section

- all log files and performance counter logs from the "Run Your Tests" section